The French Laboratory for Metrology and Testing (LNE) has just inaugurated its new AI evaluation lab, LEIA Immersion (read our article here). Stéphane Jourdain, Head of the Medical Environment Testing Division at LNE, shared insights on his team’s methods to test AI and the major challenges ahead for LNE to become a trusted authority in the field. He also discussed the new missions set last week by French President Macron to establish a national AI evaluation center. This new entity will require the development of new metrics and evaluation protocols tailored to the most advanced AI models, especially generative ones.

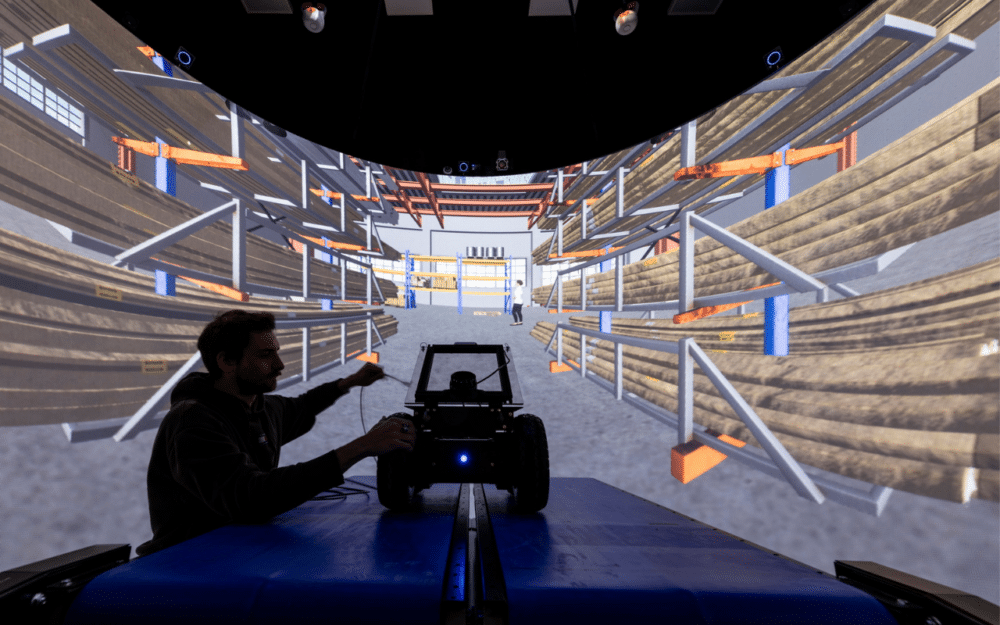

On May 14, the LNE, the French Laboratory for Metrology and Testing, inaugurated the latest addition to its AI system evaluation platform, LEIA Immersion. This lab follows the LEIA Simulation launched in 2023 and aims to advance further the evaluation of AI models, particularly those embedded in robots. The upcoming LEIA Action will test AI models in real-world conditions.

Towards an AI Evaluation Center

Last week, during the Parisian Viva Tech event and as part of the National AI Strategy and France 2030, President Emmanuel Macron made several AI-related announcements, including creating a new AI evaluation center. He stated:

“A profoundly new place, the AI Factory, will host a new AI evaluation center and aims to become one of the world’s largest centers for AI model evaluation, in line with the AI Act.”

Evaluating AI is becoming essential, especially under the AI Act, the European regulation aimed at overseeing AI models within the European territory.

Macron added that he wants this center to host a branch of the European Commission’s AI Office, particularly to provide technical expertise related to model surveillance. It will also connect to major global AI evaluation networks, including the UK’s and the US’s AI Safety Institutes.

The president also announced the organization of the “AI Olympics” to compare the performance of various competing AI solutions and facilitate the rapid maturity of general-use AI technologies in priority applications.

This future Challenge, organized by the LNE and the National Institute for Research in Digital Science and Technology (INRIA), will be the first of its kind in the world for general-use AI. It will provide participants with training and test data, as well as metrics and evaluation protocols tailored to the most advanced AI models, including generative AI.

We interviewed Stéphane Jourdain, Head of the Medical Environment Testing Division at LNE, to delve into the development of evaluation methodologies for both present and future AI systems. Our discussion covered the testing procedures, requirements, challenges, and metrics highlighted by the French President.

RELATED ARTICLE

The LNE develops methods to evaluate AI to be a trusted third party to certify trustworthy AI for consumers across Europe. Introduce us to your team and working methods.

Stéphane Jourdain: “The team consists of doctoral researchers or engineers who are still involved in research, on international projects. Because AI is constantly evolving, we must continuously learn how it operates and how to evaluate it. For example, some AI systems are deployed and then learn over time as they’re used, meaning they evolve. Research projects address such questions. Our teams need to remain up-to-date with the latest advancements, current evaluation methods, data collection, metric implementation, organizing challenges, analyzing results, and developing tools for these tasks.

At LNE, we test our clients’ products, evaluating them from electromagnetic compatibility to climate and waterproofing. The approach is the same for AI. We have been evaluating artificial intelligence since 2008. Our methods follow steps that must be adapted to new applications each time.”

What are these steps?

Stéphane Jourdain: “We define the task that the AI must perform. We collect the data that will be used for testing. It’s a whole process to collect, find, and qualify the data to see how representative they are, ensuring they have no biases. They need to be annotated, meaning someone validates, for example, if it’s about searching for an object in an image, that the object is indeed in the image and is in the right place. Then we feed all of this to the machine and the machine gives us its results. We also need to quantify the result with metrics. And these metrics need to be built.”

How are metrics built for AI?

Stéphane Jourdain: “Certain metrics are well-established, particularly in natural language processing and translation, where metrics such as error rates are commonly used. However, when dealing with images, metrics tend to be more complex. Furthermore, the specific task being performed often requires the definition of particular metrics, which involves working on their definition, calculation, and implementation to assess the results obtained from evaluating the system. In some cases, the machine’s results are compared with human-generated data, and metrics are employed to evaluate the gap between what is considered human truth and the results produced by AI. This process follows a consistent approach.”

However, since 2008, AI has evolved significantly, with systems becoming increasingly complex. The emergence of generative AI adds another layer of complexity. What additional data do you need?

Stéphane Jourdain: “At each stage, we reassess our approach. While the steps remain the same, the methods employed vary. Gathering data depends on the task and application, with some data being more challenging to obtain. For instance, accessing medical data is complex due to patient confidentiality regulations. So, how do we collect such data? Where do we find it? What is the cost associated with acquiring it? Is augmentation necessary, i.e., generating new data from existing sources but enhancing them with additional information? These are genuine concerns. Fortunately, there are specialists in data collection who provide access to databases for a fee. There are also European projects related to AI, often funded by public authorities, making data accessible. However, the downside is that this data may become outdated within six months.”

What types of data are we talking about?

Stéphane Jourdain: “It can be audio recordings, video recordings, text, images, or satellite imagery. It depends on the application. For example, we recently worked on robotic systems to reduce pesticide use. The robots needed to be fed images of what they see when they’re in the crop. So, it involves close-up photos of small patches of land with plants on them.”

How much image data is required to develop a methodology that’s relevant enough to provide a meaningful evaluation?

Stéphane Jourdain: “We need at least thousands of images. However, for certain applications, annotations are necessary. This means that a human, typically an agronomist in the case of agricultural robots, needs to identify, for example, weeds versus desired plants. Consequently, there’s a limit to the number of images we can work with. However, we ensure they are representative and cover as many scenarios as possible.”

Do you rely on the data provided by clients who come to test their AI at LNE to supply you?

Stéphane Jourdain: “Even if a company provides us with data, we approach it critically because it may be biased—either due to supplier choices or underlying intentions. Therefore, we typically acquire new data. We aim to be a trusted third party and thus seek to control the entire process rather than relying solely on provided data. While we are dependent on the system given to us for evaluation, we scrutinize the data and often obtain additional sources.”

Who are the companies that come to LNE to evaluate their AI, and why do they come?

Stéphane Jourdain: “Some companies come to us because it gives them a competitive edge to say they’ve been evaluated by a trusted third party. Others come because they’re looking to purchase AI and want to compare options. This often happens in research projects where multiple labs and developers are pitted against each other. In such cases, the client invests in evaluations to make informed decisions. Ultimately, it could be either the buyer or the seller. In the future, it may be mandated by law.”

What exactly do you test in AI?

Stéphane Jourdain: “The first question we ask is: What task does this AI need to perform? We then test how well it accomplishes that task. There’s also the question of whether the robot is dangerous. It can perform its task well but still pose a danger. These questions are discussed with the client, based on what they want to test. Since there’s no obligation, we can’t insist that they test their AI for safety. Clients typically want to know if their AI is effective and productive. They often overlook potential ethical concerns. Currently, evaluating systems from an ethical or acceptable standpoint is voluntary. It’s primarily a product performance logic. This is why regulations like the AI Act are emerging, especially for high-risk AIs, to address ethical considerations.”

Do you have access to the company’s code to run your tests?

Stéphane Jourdain: “No, when conducting independent evaluations, we can do so entirely as a black box. We have a duty of impartiality, focusing on evaluating the results and performance of the product. However, it’s not about assisting the developer by suggesting how they should proceed. The more information they provide, the better we can assist in diagnosis. For instance, if they have a gray box approach and tell us the steps involved in achieving a certain result, such as processing images, we can provide more precise feedback, pinpointing where issues might arise. However, we won’t dictate specific solutions to them; our role is to analyze and diagnose, not to prescribe solutions.”

Do you certify or only evaluate AI systems?

Stéphane Jourdain: “Everyone aims to reach that point, but currently, we’re not yet engaged in AI metrology. Certification entails adhering to regulations to evaluate the system and conclude whether it meets specified conditions. However, for testing to be certified, it requires access to data, raising regulatory issues, as seen in healthcare. There’s currently no regulatory framework beyond the AI Act, which outlines expectations without prescribing methodologies. Unlike physical product metrology, where testing methods are well-defined by standards, there’s still no equivalent for AI evaluation. Yet.”

The LNE already has a certification, established in 2021, which is the world’s first related to the AI development process.

Stéphane Jourdain: “Yes, it’s a certification that confirms we’ve audited your development process and you’ve adhered to the rules of a good development process. This means that we examine whether the data used to train the client’s system differed from that used for testing. However, this certification doesn’t imply that the AI is effective, safe, or ethical. It’s a starting point but far from sufficient.”

Is it possible to incorporate ethics into evaluations?

Stéphane Jourdain: “Currently, we can integrate ethical criteria. However, these criteria can vary between countries. But, if tomorrow Europe decides that one of the ethical criteria for CV selection is not to discriminate based on country of birth, we’ll incorporate it into the evaluation system. The guidelines will come from policymakers, and then we’ll know how to implement them.”

The LNE consists of two platforms, soon to be three. What are the differences?

Stéphane Jourdain: “We first had the LEIA Simulation platform. This involves assessing an AI system as a simulated model, existing only as a program. We feed it various data and analyze the results it produces. This simulation-based evaluation has been a long-standing practice, requiring server capacity and data storage. This also involves software development to ensure the AI understands the input data and produces results that are comprehensible to us. Additionally, we face the usual questions of determining which metrics to use for performance evaluation.

With LEIA Immersion, we aimed for something more advanced. This platform has a physical, tangible aspect. It’s heavily focused on robotics. The goal is to evaluate AI embedded in mobile, autonomous systems making decisions in simulated environments. We use motion tracking to monitor how robots move within their environment. For instance, if a robot with an arm needs to grasp an object in a projected environment, we can track its movements to ensure it’s moving in the right direction and at the correct speed. We’re also developing various environments for our clients, primarily focusing on health, agri-food, and smart city themes. These environments include hospitals, retirement homes, fields, vineyards, etc.”

LEIA Action aims for deeper exploration.

Stéphane Jourdain: “To draw a comparison, consider France’s vehicle approval body, which conducts standardized tests on tracks to determine market readiness. Similarly, LEIA Action mirrors this approach, placing real embedded AI systems in quasi-real environments. These environments are essentially standardized physical setups. They resemble containers with representative wooden elements simulating various scenarios like stairs, hospital interiors, or factory floors. These setups can vary lighting, and temperature, and simulate different times of day.

However, there are drawbacks. For instance, testing a robot in a nursing home requires replicating the exact environment. Any changes, like altered stairs, may affect evaluations. Hence, LEIA Simulation proves beneficial, offering diverse scenarios, albeit lacking realism. In contrast, LEIA Action offers greater realism but may not cover all scenarios, potentially limiting realism.”

Who are these new platforms for?

Stéphane Jourdain: “These platforms target startups and SMEs, aiming to provide testing capabilities typically beyond their reach. While large automotive companies may afford simulators for autonomous vehicles, smaller entities may lack such resources. Besides, LNE aims to establish an infrastructure that offers data access to companies unable to afford it. Start-ups, for example, need self-evaluation but may lack the financial means to purchase expensive data sets.”

What is the cost for them?

Stéphane Jourdain: “I can’t provide specific figures, but since Europe participates in the funding of these facilities, it’s aimed at making them accessible to these SMEs. So, SMEs will benefit from cheaper rates, typically around 20 to 40% off.”

But are these rates similar to those for your other types of tests?

Stéphane Jourdain: “They’re quite similar. For example, when conducting tests on vibrating pots, which are conventional testing methods widely used worldwide cost a million or more. Now, the LEIA Immersion costs around one and a half million. So, we won’t be much more expensive than a standard test. The significant benefit for start-ups and SMEs is that they will receive a discount throughout their tests which can last three years.”

How will you evaluate generative AI?

Stéphane Jourdain: “Generative AI relies on components that have been around for some time. However, it will likely require methods specifically tailored to generative AI. Yesterday, Emmanuel Macron indicated his intention to create a national evaluation center for AI in France. This is because there is a need for it. Both the LNE and INRIA, with whom we signed a partnership this morning, have an interest in building these standards and testing methods. Naturally, generative AI will be part of the scope we aim to cover. At the LNE, we are already addressing this topic and assembling a dedicated team. However, today it’s up to the entire ecosystem to implement this.”

What will be the missions of this future national evaluation center?

Stéphane Jourdain: “The idea is to develop methods for evaluating AI and ensuring its performance. This will encompass questions about ethics, safety, security, explainability, and environmental impact. The first point is to direct research and innovation, particularly in identifying risks for security and developing methodologies, protocols, and metrics for AI evaluation. The second point involves developing new tests and providing testing infrastructures to companies and administrations. Lastly, organizing a recurrent evaluation campaign of international scope to attest to the performance of general-purpose AI models. This includes the mission of organizing a challenge in generative AI. To put it in a nutshell, the French government aims to systematize these evaluations with indisputable means and methods from trusted third-party experts.”

In terms of timeline, do you have an idea?

Stéphane Jourdain: “There’s the safety summit in February 2025 in France. We need to have made some progress by then on how we’re going to organize this initial evaluation. I think by summer, we should have established the structure with the INRIA.”

There are also other types of initiatives happening in other European countries. But France wants to take a leadership role?

Stéphane Jourdain: “France aims to establish a structure that enables everyone to work together. There is the UK Safety Institute, the US Safety Institute, Korea, and Taiwan. So, it’s not about everyone working on the same subject in parallel. It’s about avoiding wasting time by doing the same thing multiple times. The idea is to divide tasks so that everyone works together concertedly on these subjects. It’s pointless for France, for example, to have different regulations from others.”

If you were to summarize today the major challenges for evaluating, and testing AI, what would it be? Access to data?

Stéphane Jourdain: “Access to data is a logistical challenge but technically, we’ll get there. The real challenge is setting up this regulation and how governance will be established at the European and national levels, and then establishing rules and standards. Other products went through this same journey decades ago. Today, everything is to be built for AI, so that’s where the real challenge lies. Our goal at LNE is to ensure that the testing facilities we develop meet the requirements for testing methods. For example, if tomorrow we’re asked to test a certain type of AI in a specific way, our challenge is to ensure that our testing facilities allow us to conduct these tests in that manner.”