According to an independent firm, the French city of Marseille is the world’s 7th largest Internet hub, thanks to the deployment of several submarine cables and the construction of data centers in the Port of Marseille. The latter is the work of a single European company, Interxion. We were able to visit their sites and meet Fabrice Coquiot, the group’s CEO for France. Among the topics we discussed were the strategic position of Marseille, energy consumption, and the challenges of global warming.

Interxion, recently rebranded to Digital Realty, is the European expert in the physical hosting of computer equipment and machines. They are different from other players in the sector such as OVH, who provide virtual hosting for websites. Interxion has more than 300 data centers around the world and on all continents.

Marseille, the Gateway City

Interxion arrived in Marseille in 2014, and their data centers are now spread over 4 buildings (MRS1, MRS2, MRS3, and the newly opened MRS4). Their customers (cloud platforms, digital platforms, telecom operators) benefit from Marseille’s opening onto the Mediterranean Sea and from the submarine fiber optic cables that carry the world’s Internet traffic. They place their core computing and network systems there to serve Africa, the Middle East, and Asia. Marseille is atypical because it is the only case where a single company, Interxion, owns all the infrastructure.

According to Fabrice Coquiot, CEO of Interxion France,

“Marseille is a global gateway city. It exists because there is a hinterland made up of the European IT heartland (Frankfurt, London, Amsterdam, Paris) and an opening towards Africa, the Middle East, India, and China. Customers from these countries are now coming to Marseille because data aggregation is taking place there.”

Marseille has benefited from a technological breakthrough with the commissioning of two submarine cables (AAE-1 and SEA-ME-WE 5) and the development of data center capacity. This has brought Marseille virtually closer to the rest of the world and has enabled extremely short data distribution times.

“It takes a datum 115 milliseconds to go from Marseille to Singapore, 0.11 seconds. A piece of data travels around the earth in less than 2 seconds.”
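As a rough sanity check on these figures, the sketch below converts a delay into the length of fiber it implies. It assumes that light in optical fiber travels at the vacuum speed of light divided by a refractive index of about 1.47, a typical textbook value that does not come from the interview. The function names are illustrative, and routing and equipment delays are ignored.

```python
# Rough propagation-delay arithmetic for optical fiber.
# Assumption: refractive index of ~1.47 (typical for silica fiber),
# not a figure supplied by Interxion.
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47
EARTH_CIRCUMFERENCE_KM = 40_075  # approximate equatorial circumference


def fiber_speed_km_s(refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Speed of light inside the fiber, in km/s."""
    return SPEED_OF_LIGHT_KM_S / refractive_index


def fiber_length_for_delay_km(delay_s: float) -> float:
    """Length of fiber that light covers in the given time."""
    return delay_s * fiber_speed_km_s()


def delay_for_fiber_length_s(length_km: float) -> float:
    """Time light needs to cover the given length of fiber."""
    return length_km / fiber_speed_km_s()


if __name__ == "__main__":
    one_way_km = fiber_length_for_delay_km(0.115)
    round_trip_km = fiber_length_for_delay_km(0.115 / 2)
    lap_s = delay_for_fiber_length_s(EARTH_CIRCUMFERENCE_KM)
    print(f"115 ms one-way    : about {one_way_km:,.0f} km of fiber")
    print(f"115 ms round trip : about {round_trip_km:,.0f} km each way")
    print(f"One lap of the equator: about {lap_s:.2f} s")
```

Under these assumptions, 115 milliseconds corresponds to roughly 23,500 km of fiber if it is a one-way figure, or about half that if it is a round trip; the interview does not say which. A lap of the equator would take about 0.2 seconds, which is consistent with the "less than 2 seconds" quoted.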

From Marseille, it is therefore possible to open services in 42 countries and reach 4.5 billion users. It’s no coincidence that GAFA, cloud-based solutions, and gaming platforms have chosen to locate their equipment in Marseille.

As a result, according to TeleGeography, a telecommunications research and consulting firm, with 38 terabits of data exchanged per second, Marseille ranks as the 7th largest Internet hub in the world in 2022. The Frankfurt hub, the world’s largest, is at 150 terabits per second.

Inside a Server Room

We visited one of Interxion’s data center facilities, MRS2. There are 7 levels of security to access a server. Barriers, badges, fingerprints, one-way locks…everything is done to ensure maximum security. The company’s mission is to ensure business continuity for their customers at all costs.

We entered one of the server rooms, very hot and noisy. Clients can reserve a private room or use half a room. Others request closed cages to obstruct the view of the type of equipment they use. These layers of security are part of the additional services sold by Interxion.

Inside the room are power supply cabinets and air conditioning. An 80 cm false floor allows for the passage of high-voltage power and cold air. The fiber internet passes through the top.

We enter a cold corridor. Each room contains four of them, and it is in this confined space that the racks, the vertical cabinets where the customers’ servers are stored on shelves on top of each other, are located. Interxion does not rent any equipment, only the racks or half racks.

It is colder here than in the rest of the room, and for good reason: this is where the cold air is blown in from below, via the floor, in front of the customers’ equipment. The warm air is expelled at the back, which explains why it is warmer in the rest of the room. The challenge, we are told, is to keep equipment temperatures around 26°C, as recommended by ASHRAE.

Various sensors constantly monitor the room (for humidity and temperature) and send the data to a building management system.

River Cooling: a Specificity of Marseille

The key challenge in a data center is to cool customer equipment properly to avoid overheating and failure. Interxion data centers generally use the free cooling technique. This technique captures the ambient air outside, usually at night, and thanks to a heat exchanger, blows it into the room.

But in Marseille, a new technology called river cooling has been developed.

This technique is based on the recovery of naturally cold water from an old gallery dug at the end of the 19th century. The artificial canal was used to evacuate water from the mines of Gardanne, north of Marseille, to the port. This water, at 15°C all year round, was not being used. The group therefore decided to exploit the coldness of this water via heat exchangers and use it to cool the air blown into the rooms.

According to Interxion, river cooling provides 22 megawatts of cooling capacity and cools three of the four buildings. During this summer’s heat wave, the needs of the sites were all covered by river cooling, the company assures us.

A Marketplace in the Data Center

We then went to a room run solely by Interxion technicians, called a meet-me room. This room allows customers to meet virtually and represents an important commercial offer for the group, as Fabrice Coquiot explains:

“Our data centers concentrate communities of customers which allows them to interact with partners through these meet me rooms to achieve their digital transformation, improve their services or develop new ones. For example, our customers can connect to a telecom provider or cloud platform in minutes.”

Interxion customers can request a fiber-to-fiber interconnection with another customer and access cloud services, cybersecurity platforms, data distribution platforms, and more, directly from the data center. This saves precious milliseconds of latency and thus improves performance for users. This so-called cross-connect offering is a big market for the group which goes far beyond hosting equipment.

“Our customers need to find value in being hosted with us beyond even the core and primary missions of safety and business continuity. This means we need to have a diversity of customers and segments to enable them to achieve their digital transformation.”

We did a demonstration in the room. The fiber that runs from customer A’s server is physically connected to the fiber from customer B’s server via a patch panel in one of the four meet-me rooms. It only takes a minute.

Construction Challenges

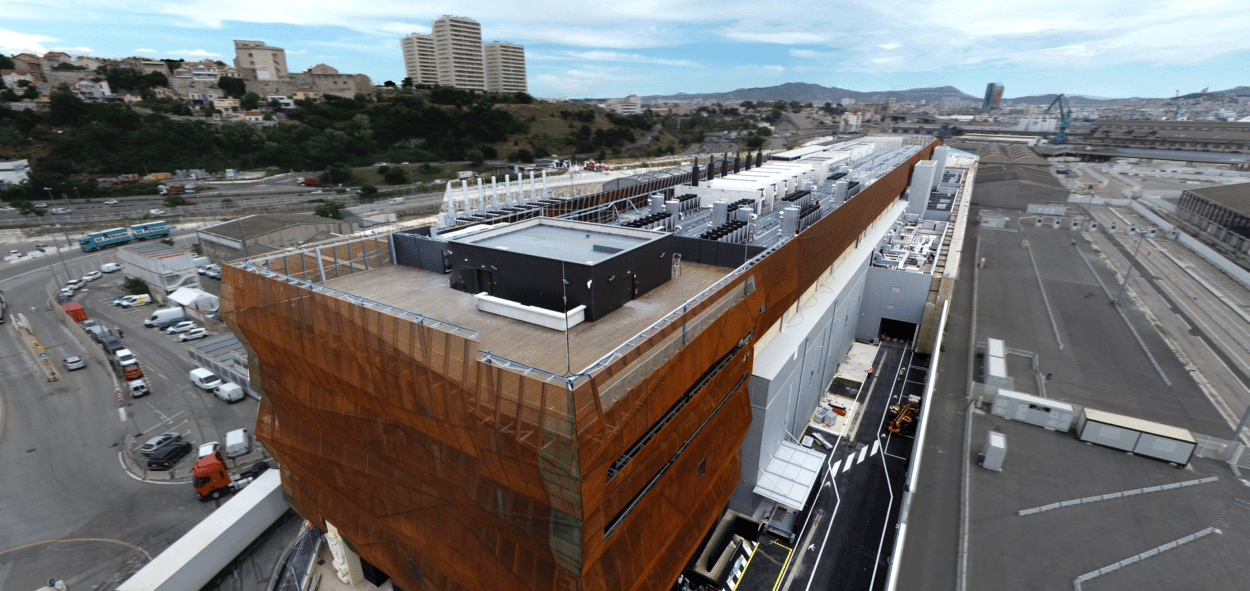

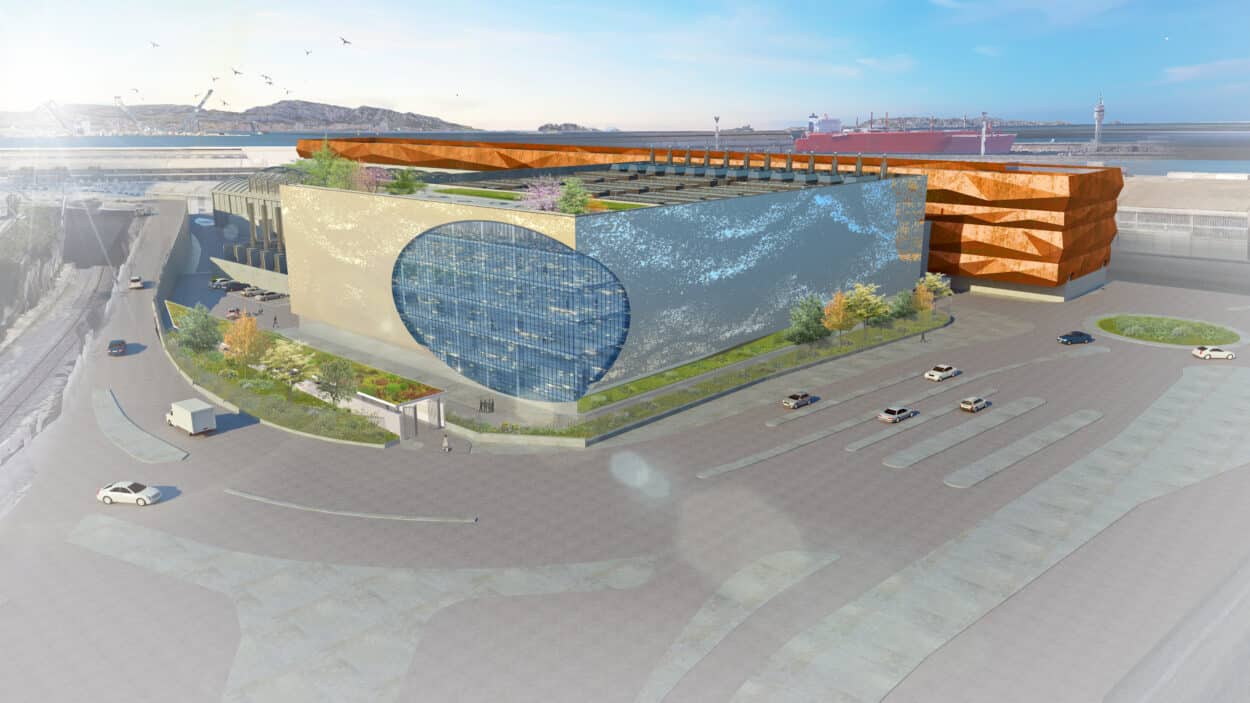

Designing Interxion’s data center buildings in Marseille was a real challenge because each one had to be adapted to an existing building. MRS1 was the telephone company SFR’s building. MRS2 is a former ship repair workshop with protected architecture. MRS3 is a former submarine base built by the Germans during WWII. An architectural effort had to be made for each of the buildings, which is not usually the case for a data center.

Added to this is the particular position of the site, on the waterfront, explains Mr. Coquiot:

“We are exposed to 2 phenomena: the surrounding salinity and microparticles linked to the port’s activity, which is annoying for the air filtering of our installations. We have therefore implemented specific solutions in Marseille, such as special welding of our piping networks inside our buildings. Regarding MRS3, which is very exposed to sea spray, we added a corten structure with self-protection of the steel.”

The Energy Issue

Data centers need a constant supply of electricity to operate and cool the customer equipment. According to various estimates, the annual electricity consumption of data centers is about 500 terawatt-hours. This means that 1% of the world’s electricity is used to power data centers. It could be 3% by 2030. Other sources estimate that the average power draw of a data center is between 30 and 100 megawatts, which is the equivalent of the consumption of cities with 25 to 50 thousand inhabitants.
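Power (megawatts) and energy (megawatt-hours) are easy to mix up in figures like these. As a minimal sketch, assuming a constant load over a non-leap year of 8,760 hours (a simplification, with illustrative function names), the conversion between the two looks like this:

```python
# Converting between average power and annual energy.
# Assumption: the load is constant all year, which real sites only approximate.
HOURS_PER_YEAR = 24 * 365  # 8,760 hours in a non-leap year


def annual_energy_gwh(average_power_mw: float) -> float:
    """Energy used in a year (GWh) by a site drawing a steady power (MW)."""
    return average_power_mw * HOURS_PER_YEAR / 1_000


def average_power_gw(annual_energy_twh: float) -> float:
    """Steady power (GW) that adds up to the given annual energy (TWh)."""
    return annual_energy_twh * 1_000 / HOURS_PER_YEAR


if __name__ == "__main__":
    for power_mw in (30, 100):
        print(f"{power_mw} MW all year = {annual_energy_gwh(power_mw):,.1f} GWh")
    print(f"500 TWh per year = {average_power_gw(500):.1f} GW on average")
```

Under those assumptions, a site drawing a steady 30 MW uses about 263 GWh a year, and 500 TWh a year corresponds to an average draw of roughly 57 GW.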

According to Interxion, the power consumption of all their French data centers (13 in total: 9 in Paris and 4 in Marseille) is the equivalent of a city with 53,000 inhabitants.

Reducing energy consumption therefore remains the number one challenge for all data centers. Interxion has put in place a number of measures to save energy. For example, when a customer rents a rack but does not use the whole space, Interxion staff install blanking panels in front of the empty shelves to avoid blowing cold air where it is not needed. They are carbon neutral on Scope 1 and 2 (Scope 1: direct emissions from the business. Scope 2: emissions from the business’ electricity consumption). They have even made it a competitive advantage.

“20 to 30% of our customers’ annual bill is made up of energy. For some customers, this means millions of euros. So it’s in their interest to be more efficient. Less than 20% of data centers in France have a PUE (energy consumption indicator) of less than 1.67. In Marseille, we are at 1.11. And Interxion is the only player in France with a neutral Scope 1 and 2. We are erasing the carbon footprint of our customers. That’s why they look for partners like us.”
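PUE (power usage effectiveness) is the ratio of a facility's total energy use to the energy used by the IT equipment alone, so a value of 1.0 would mean no overhead at all. The sketch below, with illustrative function names and a made-up IT load of 1,000 kWh, shows what the two PUE values quoted above mean in terms of overhead for cooling, power distribution, and so on.

```python
# PUE = total facility energy / IT equipment energy.
# The 1,000 kWh IT load below is an arbitrary illustration, not Interxion data.


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness for a given period."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(pue_value: float, it_equipment_kwh: float) -> float:
    """Energy spent on everything other than the IT equipment itself."""
    return (pue_value - 1.0) * it_equipment_kwh


if __name__ == "__main__":
    it_load_kwh = 1_000
    for label, value in (("Marseille", 1.11), ("French benchmark", 1.67)):
        extra = overhead_kwh(value, it_load_kwh)
        print(f"{label}: PUE {value} -> {extra:.0f} kWh overhead per {it_load_kwh} kWh of IT load")
```

For every 1,000 kWh consumed by servers, a PUE of 1.11 implies about 110 kWh of overhead, against about 670 kWh at a PUE of 1.67.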

For this winter, Fabrice Coquiot is not worried about possible power outages because everything is in place, he says, to ensure business continuity. All Interxion data centers are designed to run for 72 hours at full load without any need for the grid thanks to generators.

We saw these backup generators located on the roofs of the buildings. They use HVO synthetic diesel, which produces 90% lower carbon emissions than conventional fuel oil.

However, to reassure their customers, the group has decided to fill their tanks with 100% fuel oil, which gives them autonomy of 12 to 15 days. They are even currently building an 80-megawatt HVB substation to free themselves from the energy distributor Enedis.

But Fabrice Coquiot is more concerned about the effects of climate change and how data centers will have to adapt to new temperatures.

“When we started building data centers in Europe at the end of the 1990s, we were set on a resilience rate of 35°. For years now, in Paris, we’ve had 42° a few days a year. We had to make retrofits. Today we are set on 45° of global resilience. In the next few years, we could retrofit again. The problem is that we are starting to reach physical limits. But there will be a time when air cooling will no longer be enough.”

This summer, Oracle’s and Google’s data centers in London could not withstand the heat wave. Their cooling systems failed, leading to the shutdown of some services and machines.

More and more customers are also making specific requests for very high-density high-performance computing (HPC). This requires large electrical concentrations, with 30 to 40 kilowatts of power per rack. Among the solutions considered is immersion cooling, in which servers are submerged in a special liquid.

“Will it be 5 years or 10 years from now? In any case, 95% of our data centers are liquid cooling ready. Since we already bring glycol water into each of the rooms to put it into the air conditioning compressors to send cold air, we would just have to divert the pipe to put it into a bath, which doesn’t change much.”

How much and in what density is another question. But it is certain that some data center designs will have to change in the near future.

There is also a new threat to all data centers around the world: attacks on undersea cables. The recent sabotage of the Nord Stream gas pipelines in the Baltic Sea, in the context of the war in Ukraine, has rekindled concerns about the risk of attacks on undersea telecommunication cables such as those going to and from Marseille. The recent events are also a reminder of the vulnerability of fiber optic cables, which are simply laid on the seabed. They are the foundation of the global Internet and the world’s economy.