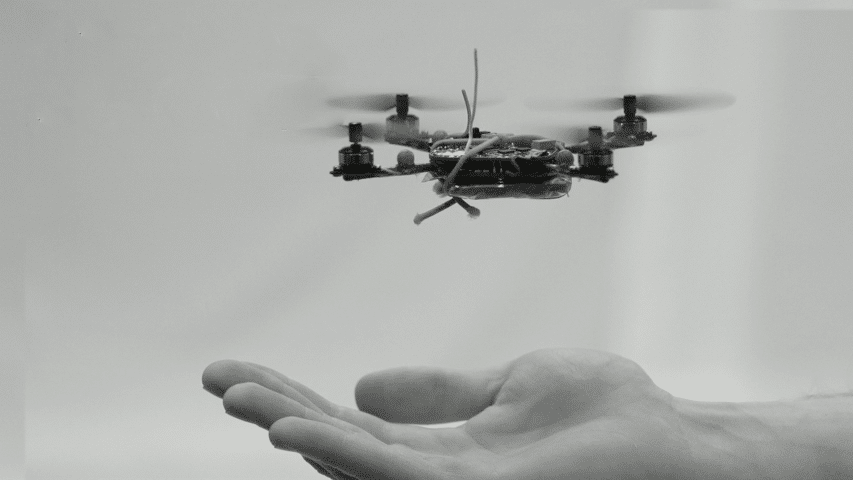

University of Pennsylvania’s GRASP Labs (General Robotics, Automation, Sensing & Perception) will see its leading-edge research on unmanned aerial vehicles (UAVs) reach the market through Exyn Technologies. The IP developed at GRASP includes aerial robots that not only generate maps of structures and spaces but can also work in groups without any piloting. They can even pick up and haul heavy objects together. These drones are designed to carry out high-level missions in construction, disaster surveys and much more. As a spin-out from GRASP Labs, Exyn is leveraging this IP and plans to complete its first commercial prototype by the end of 2017.

Currently, the team at Exyn Tech is focused on fully autonomous navigation, so that the aerial vehicle can safely fly missions with minimal or even no operator control. The 2017 prototype will demonstrate a variety of capabilities for indoor applications.

Exyn’s robot carries all the necessary components onboard, making it fully independent. For example, the robot doesn’t require GPS; instead, it employs a combination of active and passive onboard sensors. These sensor inputs are processed by the company’s proprietary software, which allows the robot to sense its environment, construct a map, figure out where it can and can’t fly, establish an optimal path for its mission, and execute the appropriate trajectory, all in real time. Critically, the robot does not require external infrastructure: there’s no need for motion-capture cameras, beacons or barcodes distributed throughout the environment.
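Exyn’s software is proprietary and the article does not describe how it works. Purely as a generic illustration of the "build a map, find where you can fly, plan a path" idea, the sketch below assumes a hypothetical 2D occupancy grid (0 = free, 1 = obstacle) and runs textbook A* search over it. Real aerial robots plan in 3D, update the map continuously from sensor data, and turn the resulting path into a smooth flight trajectory; none of that is modelled here, and the names `GRID`, `neighbors` and `plan_path` are illustrative only.

```python
import heapq

# Hypothetical occupancy grid: 0 = free space, 1 = obstacle.
# A real robot would build and update such a map from its onboard sensors.
GRID = [
    [0, 0, 0, 0, 0],
    [1, 1, 0, 1, 0],
    [0, 0, 0, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]


def neighbors(grid, cell):
    """Yield the 4-connected neighbours of cell that are inside the grid and free."""
    r, c = cell
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] == 0:
            yield (nr, nc)


def plan_path(grid, start, goal):
    """A* search with a Manhattan-distance heuristic.

    Returns the shortest list of cells from start to goal, or None if the
    goal cannot be reached through free space.
    """
    def h(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from = {start: None}
    cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk the parent links back to the start to recover the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue  # stale heap entry: a cheaper route was already found
        for nxt in neighbors(grid, cell):
            new_g = g + 1  # every move between adjacent cells costs 1
            if new_g < cost.get(nxt, float("inf")):
                cost[nxt] = new_g
                came_from[nxt] = cell
                heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None


if __name__ == "__main__":
    print(plan_path(GRID, (0, 0), (4, 0)))
```

In this toy map the planner threads through the gap in the second row rather than taking the longer route around the right-hand edge, which is the kind of "where can I fly, and what is the best way there" decision the paragraph above describes.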

DirectIndustry e-magazine met chief engineer Jason Derenick in his Philadelphia office in mid-December.

Our research branch focuses on developing algorithms and core software, which are tested in a simulation environment. That work then goes to our prototype development branch, where it is tested in the physical world.

There, the various components are integrated to augment the robot’s overall capabilities.

Each piece that gets put in is an additional autonomous capability. Right now, what we’re working on is the map.

CEO Nader Elm adds:

It’s interesting to see the evolution of the market. Drones have received a lot of press attention as consumer products. It’s Christmas right now, so a lot of drones have been sold. That is great, and we celebrate the innovation and growth in that segment, but that’s not the market we are targeting.

Piloted drones have shown great potential in the commercial market. Elm explained that in certain contexts, off-the-shelf consumer drones have been put to work inspecting infrastructure such as bridges, utility towers, communications towers and other high-value assets. Almost all of today’s applications, however, are outdoors.

What we aim to achieve is the creation of an entirely autonomous aerial vehicle.

The company is building the solution from the ground up, but is heavily leveraging research conducted under Dr. Vijay Kumar, Dean of the University of Pennsylvania’s School of Engineering and Applied Science. Dr. Kumar’s groundbreaking research is recognized throughout the robotics community and earned him a government role: he served as Assistant Director of Robotics and Cyber Physical Systems at the White House Office of Science and Technology Policy (2012-2013). His work has also been showcased at TED with demonstrations of robots performing impressive stunts. Elm added:

The various elements of the research, however, are highly focused, and each is just a sliver of what autonomy involves. We have to tightly integrate all the different components and optimize them to run on one vehicle. That in itself is a significant endeavor.

The Exyn Tech team envisions expanding into UAV swarm applications. If a task can be divided, multiple cooperating robots could execute a mission in dramatically less time. For example, they could perform mapping or search-and-rescue missions, covering a lot of ground quickly with just one operator. Swarms could also be deployed to rapidly explore and map high-risk buildings.

The Exyn aerial robot is currently capable of sensing and examining its environment, allowing it to establish a map of where it can and cannot fly. But there are exciting advancements in adjacent technology areas.

There’s a lot of active research and development in computer vision and machine learning so that robots can eventually tag and recognize objects for what they are. That could unlock some very exciting future capabilities, such as robots adapting their mission profile based on what they learn in flight.