For on-road vehicles to become fully autonomous, they must be aware of everything around them (people, other cars, road layouts) as quickly and easily as a human driver.

RoboSense and the new LiDAR

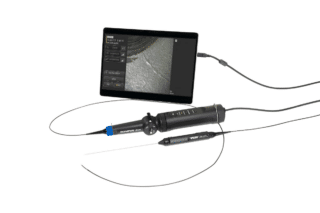

This requires the appropriate sensing technology, which RoboSense believes it has. The China-based company recently introduced its MEMS (microelectromechanical systems) solid-state RS-LiDAR-M1 system, which it says meets the demand for “Level 5” compliance.

According to the company’s vice-president of R&D Dr. LeiLei Shinohara,

“The product range was designed for automotive grade mass production from the start. MEMS avoids complex mechanical spinning technology, instead the MEMS mirror deflects laser beams to scan the environment thereby providing the ability for large volume mass production.”

Dr. Shinohara also says that the new version offers:

“[…] a breakthrough on the measurement range limit based on 905nm LiDAR with a detection distance up to 200 metres, as well as an upgraded optical system and signal processing technology to provide a final output point cloud effect which can now clearly recognize even small objects.”

Furthermore, the RS-LiDAR-M1 provides an increased horizontal field of view of 120 degrees so that only five units are needed to ensure there is no blind zone around the car.
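The coverage claim can be checked with simple angle arithmetic. The sketch below is illustrative only: the function name `uncovered_arcs` and the evenly spaced mounting headings are assumptions, not RoboSense's actual layout. It treats all sensors as if they sat at a single point and ignores vertical field of view, mounting offsets and occlusion by the car body.

```python
def uncovered_arcs(headings_deg, fov_deg=120.0):
    """Return the horizontal arcs (start, end), in degrees, that no sensor covers.

    headings_deg: hypothetical mounting directions, one per sensor.
    fov_deg: horizontal field of view of each sensor (120 for the RS-LiDAR-M1).
    """
    if fov_deg >= 360.0 and headings_deg:
        return []

    half = fov_deg / 2.0
    intervals = []
    for heading in headings_deg:
        start = (heading - half) % 360.0
        end = start + fov_deg
        if end <= 360.0:
            intervals.append((start, end))
        else:
            # The field of view wraps past 360 degrees: split it in two.
            intervals.append((start, 360.0))
            intervals.append((0.0, end - 360.0))

    # Sweep from 0 to 360 degrees and record every stretch left uncovered.
    intervals.sort()
    gaps = []
    cursor = 0.0
    for start, end in intervals:
        if start > cursor:
            gaps.append((cursor, start))
        cursor = max(cursor, end)
    if cursor < 360.0:
        gaps.append((cursor, 360.0))
    return gaps


# Five units spaced 72 degrees apart (an assumed layout) leave no gap.
print(uncovered_arcs([0, 72, 144, 216, 288]))
# Two units facing front and rear leave two 60-degree blind arcs.
print(uncovered_arcs([0, 180]))
```

Under these simplifying assumptions, five evenly spaced 120-degree units overlap their neighbours by 48 degrees. That overlap gives some margin for the real-world effects the sketch leaves out.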

“Based on a target mass production cost of $200, we are making large volume autonomous driving deployment feasible,” the company says.

But Dr. Shinohara also says that meeting the safety requirements for autonomous driving calls for multiple detection systems.

“Conventional technologies such as cameras and radars have their limitations. Cameras do not work well under poor ambient light conditions and radars have limitations detecting an unmoving non-metallic obstacle. These weaknesses can be corrected by lidar, but lidar also has limitations. Therefore lidar, radar and cameras need to be fused together.”

As such, Dr. Shinohara argues, fully autonomous driving “will not be provided quickly.” For him,

“Ensuring the necessary fusion of systems will require a powerful embedded computation platform and a reliable 5G V2x communication system.”

General Motors and the Production-Ready Vehicle

One major car manufacturer gearing up for the actual production of driverless vehicles is US giant General Motors (GM). The company announced last year that it hoped to start commercializing its Cruise AV car in 2019. It describes the car as its “first production-ready vehicle built from the ground up to operate safely on its own with no driver, steering wheel, pedals or manual controls.”

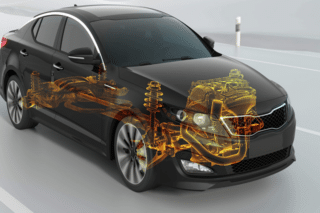

When it is introduced, the vehicle seems likely to find its initial use in urban ride-sharing schemes within geofenced areas. GM describes developing self-driving vehicles as the greatest engineering challenge of our generation. To meet that challenge, it states that “a safe self-driving capability must seamlessly integrate the self-driving system into the vehicle.”

Information released by GM shows that its self-driving vehicle will make use of a range of sensing technologies including lidars, cameras and multiple types of radars.

Jaguar’s Cars and Pedestrians

Meanwhile, UK-based manufacturer Jaguar Land Rover (JLR) has been addressing an aspect of driverless vehicles every bit as vital to their success as keeping the occupants safe: ensuring the safety of pedestrians as well as their trust in the vehicles.

The company is testing the interaction between people and self-driving “pods” in Coventry, using “virtual eyes” and light patterns to signal to pedestrians. The “virtual eyes” are electronic devices on the front of the vehicles that can “look” left or right, indicating the vehicle’s awareness of pedestrians. Light patterns projected on the ground show whether the vehicle is accelerating or slowing down, and the patterns can tilt sideways during turns.

A spokesman for JLR confirms:

“We fitted ‘virtual eyes’ to the intelligent pods to understand how humans will trust self-driving vehicles, as research studies suggest that as many as 63% of pedestrians worry about how safe it will be to cross the road in the future. Low speed navigation in an urban setting is one of the key areas of development for automated vehicles. It is inevitable that they will share spaces with people who may feel uneasy at the presence of a vehicle being controlled by computers. By gaining a deeper understanding of more comprehensive communication with the pedestrian, over and above conventional indicators and headlights, we can design vehicles that will be more readily accepted by other road users.”